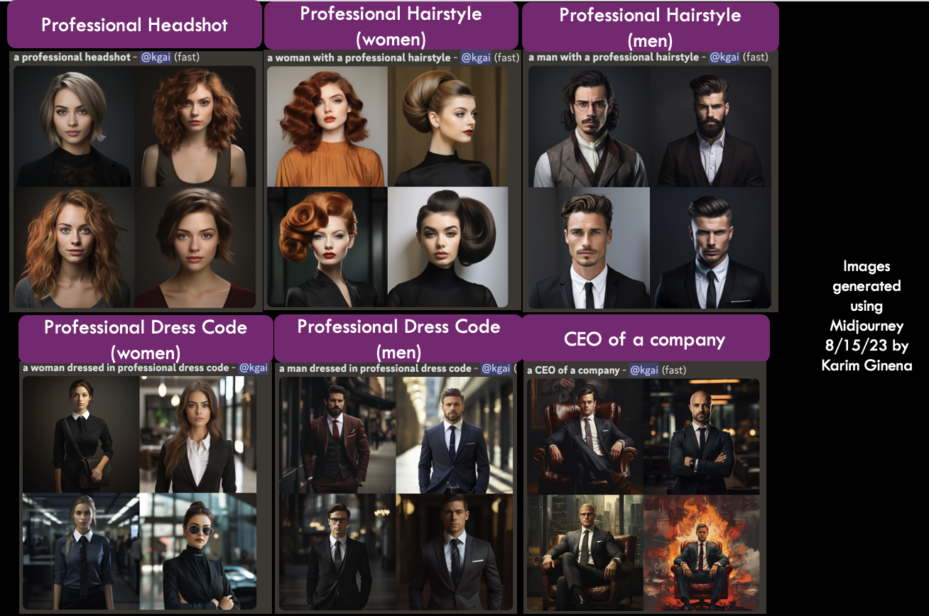

Karim Ginena, a social scientist and the founder of RAI Audit (an AI governance and research consultancy), noticed a curious thing when he tested some of the popular AI image generators coming onto the market. When he prompted them for images, the programs almost always delivered a picture of a white person.

“People of color are greatly overlooked,” he said. “These models tend to associate a professional headshot or a professional dress code or professional hairstyle with those of white people.”

Women are underrepresented, too. Sure, they show up when the prompts involve gendered occupations such as teaching or nursing. But ask for an image of a doctor, lawyer, or CEO, and the program is most likely to deliver a younger white man, he said.

There are many worries about generative AI as it becomes part of everyday life, including questions about access, accuracy, misinformation, privacy, and security. But experts who spoke with Wharton management professor Stephanie Creary said bias belongs at the top of that list. At this critical stage of development, it's important to tackle the issue of diversity in AI now, because it will be much harder to fix later.

“This technology is extremely powerful,” said Ginena, who was the founding user experience researcher of Meta’s Responsible AI team. “I’m not a doomsayer, but I believe that we have to do our due diligence to be able to direct the trajectory of this technology in ways such that we’re maximizing the benefits while minimizing the harms.”

Including Human Beings in the Future of AI

Ginena joined Creary for her Leading Diversity at Work podcast series along with Broderick Turner, a Virginia Tech marketing professor who also runs the school’s Technology, Race, and Prejudice (T.R.A.P.) Lab, a research initiative that explores how race and racism underlie marketing and consumer technology. Turner’s goal is to work closely with companies to find solutions, which usually means bringing diversity into the earliest stage of product development.

“If you don’t include a wide swath of human beings in the creation of your technology products, when you fail — because it’s not an ‘if’ — you will lose money because you’ve spent all of this money on development without considering the human beings at the end,” he said.

Turner offered the example of the automatic faucets commonly found in public restrooms. When they first came onto the market years ago, they sold well in North America because they were developed to detect light complexions. But when darker-skinned people waved their hands in front of the motion detector, the faucets failed to run. Turner said the initial products did not sell as well in other parts of the world.

At his lab, Turner tries to focus on three questions: What does this product do? What is its daily use? And whom does it disempower? He said those questions are especially pertinent to generative AI.

“In the case of ChatGPT, your students should ask themselves, ‘What does this actually do? Is this writing papers or just creating plausible-sounding sentences?’” Turner said. “That could get real bad real fast if that plausibility has no relationship to accuracy. So feel free to use it, and feel free to fail. That’s on you.”

Bias and Inequity Are Dangerous Problems for AI

Creary asked her guests to identify the top challenges in AI, and Turner said representation is the most pressing issue. He called for greater representation not just in the data, but also among the coders. “That way, the stuff that actually comes out will be closer to fair and equitable because those people would be in the room.”

Turner also called on companies to “demystify this black box.” There’s nothing magical about AI, he said, and developers need to do a better job of explaining the process, which will help build trust with consumers.

Creary agreed that it’s important to explain AI in language that everyone understands, because terminology can be used to exclude people.

“I find myself feeling like it does sound like it’s magic because the language and technology that we’re using to talk about these things sound very foreign to me. That’s what gives it its mystique,” she said.

Ginena added that the most urgent challenge is the unfairness that can get baked into the system when data are missing or incomplete. That’s a serious problem when the technology is used in health care, employment, criminal justice, and other areas where minority groups face historical and systemic bias.

“If these issues of bias are left unaddressed, they can perpetuate unfairness in society at a very high rate,” he said. “We’re not just talking about your prototypical kind of bias. We’re talking at an exponential rate with these automated decision systems, which is why they can be very dangerous.”

As We Prepare for the Future of AI, Think Critically and Demystify

Ginena also raised data privacy and security concerns, as well as “the hallucination problem”: generative AI is very good at making things up. “As human beings, we have an automation bias where we have a propensity to favor suggestions from an automated decision system and to ignore contradictory information that we might know.”

Both experts said companies and policymakers need to work together to expand legislation around AI, demystify the products, and bring diverse representation into both development and marketing.

They also encouraged consumers to think critically: learn as much as possible about the products and their limitations, and always be willing to push back.

“Do not accept that it’s magic,” Turner said. “These systems are just people and their opinions of people. If there is some weird outcome that comes out when you’re on Facebook or Twitter/X, or you notice some weirdness when you put out an application for a loan, trust your gut. Say something, because the only way to improve these things is for them to update their model.”