In the past year, you have most likely read a story that was written by a bot. Whether it was a sports article, an earnings report or a story about who won the last congressional race in your district, an emotionless artificial intelligence may have moved you to cheers, jeers or tears without your knowing it. By 2025, a bot could be writing 90% of all news, according to Narrative Science, whose software Quill turns data into stories.

Many of the largest and most reputable news outlets in the world are using or dabbling in AI — such as The Washington Post, The Associated Press, BBC, Reuters, Bloomberg, The New York Times, The Wall Street Journal, The Times and Sunday Times (U.K.), Japan’s national public broadcaster, NHK, and Finland’s STT. Last year, China’s Xinhua News Agency created the world’s first AI-powered news anchor, a male, using computer graphics. This year, it debuted the first AI female news anchor.

Even smaller outlets are publishing AI-written stories if they subscribe to services that create them, such as the AP and RADAR, which stands for Reporters And Data And Robots. A joint venture of the U.K. Press Association and tech firm Urbs Media, RADAR is an AI-powered news agency that generates thousands of local stories per week for U.K. media outlets that subscribe. Globally, a survey of nearly 200 top editors, CEOs and digital leaders showed that nearly three-quarters are already using AI, according to a 2018 report by the Reuters Institute for the Study of Journalism.

AI lets newsrooms operate more cost-effectively since a bot can generate a much larger volume of stories than humans can. The AP uses AI to expand its corporate earnings coverage to 4,000 companies from 300. The Washington Post is now able to cover all D.C.-area high school football games, thanks to its Heliograf bot. The Atlanta Journal-Constitution became a finalist for a Pulitzer Prize after investigating sexual abuse by doctors. It used machine learning to scour more than 100,000 disciplinary documents. Reuters’ AI takes government and corporate data to generate thousands of stories a day in multiple languages.

Jeremy Gilbert, the Post’s director of strategic initiatives, said in a recent interview with Wharton’s Mack Institute for Innovation Management that using a bot grew the paper’s election coverage exponentially. “Instead of turning out one story the morning after the election … during the primary night, we count the votes and Heliograf can report every 30 to 90 seconds what is going on, who’s ahead, who’s behind, etc.,” he noted. In a statewide race, “we can compare turnout from 2018 to 2016 every 30 to 90 seconds. As new data comes in, we can publish a new story.”

At Bloomberg News, the Cyborg bot can scrape earnings reports to spit out headlines and a bullet-pointed story very quickly. “That first version is very close to what [a human reporter] would have done in 30 minutes minus, of course, the quote from the analyst,” said Editor-in-Chief John Micklethwait during a speech at the DLD (Digital Life Design) Conference in January. AI also can scour global social media quickly and at scale to spot posts about disasters, shootings, resignations, and other newsworthy events. In some form or fashion, automation now touches 30% of all Bloomberg content.

BBC’s AI tool is Juicer, which is a news aggregation and content extraction tool. It watches 850 RSS feeds globally to take and tag articles at scale. Reuters’ News Tracer combs social media posts to find breaking news and rates its newsworthiness. It verifies the news like a journalist would, looking at the poster’s identity, whether there are links and images, and other factors.

Non-media firms are also using AI to generate news articles. In 2017, Quartz executive Zach Seward gave a speech at a conference in Shanghai, and before he even left the stage, Chinese tech firm Tencent had already used an AI called Dreamwriter to post an article about his talk, translated into Chinese. He tweeted that it was a “not-half-bad” news story.

Will Robots Replace Reporters?

Newsrooms are accelerating AI efforts that began years ago. Back in 2014, Noam Latar, dean of the Sammy Ofer School of Communications at the Interdisciplinary Center in Herzliya, Israel, had been conducting research on AI in journalism and predicted that the technology would catch on in media because of its cost savings potential, according to an interview with Knowledge at Wharton.

But as use of AI rises, concern also grows about its impact. “We have … a new spectre haunting our industry — artificial intelligence,” said Micklethwait, echoing the opening sentence of Marx’s Communist Manifesto. He said journalists are afraid that they will be replaced by robots, the quality of writing will decline and fake news will proliferate.

But Micklethwait disputed this scenario. “Some journalists will be replaced by robots but not many of them. News will get more personalized but not always in a bad way,” he said. “We will still sometimes get hoodwinked by fraudsters and by autocrats, but to be honest that has always happened.” However, AI does up the ante for the beat reporter to deliver more insights or break news.

Indeed, a human journalist who delivers meaningful analyses or interpretations can blow away AI. “AI will generate some news stories, but a big part of journalism is still about pluralism, personal opinions, being able to interpret facts from different angles,” Wharton marketing professor Pinar Yildirim told Knowledge at Wharton. “And until the day AI is much better than humans in judgement and interpretation, we will need human interpretation of facts, events and news stories.”

What AI does is take over routine reporting so journalists have time to pursue more interesting stories, said Meredith Broussard, an assistant professor at New York University’s Carter Journalism Institute, on the Knowledge at Wharton radio show on SiriusXM. “Robot reporting is not going to replace human journalists any time soon,” she said. “AI is very good for generating multiple versions of basically the same story. So, it’s really good in financial reporting where you’re talking about earnings reports, and the stories don’t really change all that much.”

AI works best for stories that use highly structured data, added Seth Lewis, chair in emerging media at the University of Oregon School of Journalism and Communication, who also spoke on the radio show. Sports stories are a good example. “Because of automation, [the AP, for example] can create stories that turn those box scores into narrative news,” he said. “It sounds like a human, reads like human reporting, but it’s just … a template-based approach to various narrative styles.”

At RADAR, journalists develop templates for AI to fill in with local data. These templates include “fragments of text and logical if-then-else rules for how to translate the data into location-specific text,” according to its website. Data journalists have to come up with various angles and storylines, and then do some reporting to “add broad-strokes background information and national context, which are written into a template with a basic story structure.” The result is stories with a similar core structure but with local details. Using AI, one data journalist can produce around 200 regional stories for each template every week, RADAR said.
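
The template approach described above can be illustrated with a minimal sketch. This is not RADAR's actual code: the `LocalFigures` fields, the library-visits topic and the function names are all hypothetical, chosen only to show how if-then-else rules might turn one row of local data into location-specific text wrapped around shared national context.

```python
from dataclasses import dataclass


@dataclass
class LocalFigures:
    """Hypothetical per-area data row; the field names are illustrative only."""
    area: str
    visits_this_year: int
    visits_last_year: int


def trend_fragment(row: LocalFigures) -> str:
    """If-then-else rule that picks a text fragment based on the local data."""
    if row.visits_last_year == 0:
        # No baseline to compare against, so avoid a percentage entirely.
        return "were recorded for the first time"
    change = row.visits_this_year - row.visits_last_year
    pct = round(abs(change) / row.visits_last_year * 100)
    if change > 0:
        return f"rose {pct}% on the previous year"
    elif change < 0:
        return f"fell {pct}% on the previous year"
    else:
        return "were unchanged from the previous year"


def render_story(row: LocalFigures, national_context: str) -> str:
    """Fill the shared story structure with local details plus national context."""
    return (
        f"Library visits in {row.area} {trend_fragment(row)}, "
        f"reaching {row.visits_this_year:,}. {national_context}"
    )


if __name__ == "__main__":
    context = "Nationally, visits were broadly flat."  # written once by a journalist
    for row in [
        LocalFigures("Leeds", 1200, 1000),
        LocalFigures("Bristol", 900, 1000),
    ]:
        print(render_story(row, context))
```

Every area gets the same core structure, and only the data-driven fragments differ, which is why one template can yield hundreds of regional versions.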

Human intelligence guides AI at Bloomberg as well. “We need journalists to tell Cyborg what to do,” Micklethwait said. “We need humans to double-check what comes out. … We can’t afford to be wrong. A lot of this is, in fact, semi-automated. … It tends to be humans and machines working together.” AI enhances the reporter’s work, too. For example, it can take over laborious tasks such as transcription of recordings, using voice recognition technology. And a bot can join multiple news conference calls at once, listening for specific words to see if there’s breaking news.

One big game changer in AI is automatic translation, Micklethwait said. Foreign correspondents typically report in one language and write in another: for example, they conduct an interview in Mandarin and write the article in English. But with fast machine translation, “reporters break news in whatever language they prefer and computers mostly translate it,” he said. The reporter vets the final version before submitting the story. While bots do make mistakes (a bot may confuse company ties with neckties, for instance), they don’t repeat the same error, Micklethwait said.

Artisanal News?

Yildirim said there’s another reason why AI cannot replace quality news written by humans: Research shows that people don’t consume media “in a purely rational way.” Consumers want bells and whistles, and a point of view they agree with, whether they explicitly acknowledge it or not. According to a 2013 research paper she co-authored, people want information that conforms to their beliefs, which drives their choice of news outlets. “This theory suggests that consumers do not want to hear the naked truth alone,” Yildirim said. “They want to hear news which appeals to their belief system — their version of the world.”

For example, let’s say President Trump’s poll numbers show that 40% of people support him one month, and that figure rises to 45% the next month. “These uncontestable facts can be interpreted very differently by different journalists, and will appeal to consumers with different belief systems,” Yildirim said. Right-leaning outlets would report that Trump’s support is increasing while left-leaning media would say his support remains under 50%. “They would both be correct [but have] different interpretations from the data.” For AI to replace journalists, these algorithms had better be able to generate the news that people want to hear, she added.

In reality, AI-written news stories are actually quite repetitive since they come from templates, Broussard said. “Every single college baseball story based on structured information is basically the same, so you’re going to get the same story over and over again,” she said. “And you have to think: Do you really want that? … So, there’s a point of diminishing returns with AI-generated news. It’s really nifty, but it has very limited utility.”

Another issue is that AI introduces new legal implications into journalism that haven’t been sorted out yet. “If an automated story were to defame an individual, who’s responsible? And what does that look like?” said Lewis, who has researched libel issues in AI-generated stories. “I don’t think we’re quite ready to figure out ways of suing algorithms. Ultimately, humans are going to be responsible, one way or another.”

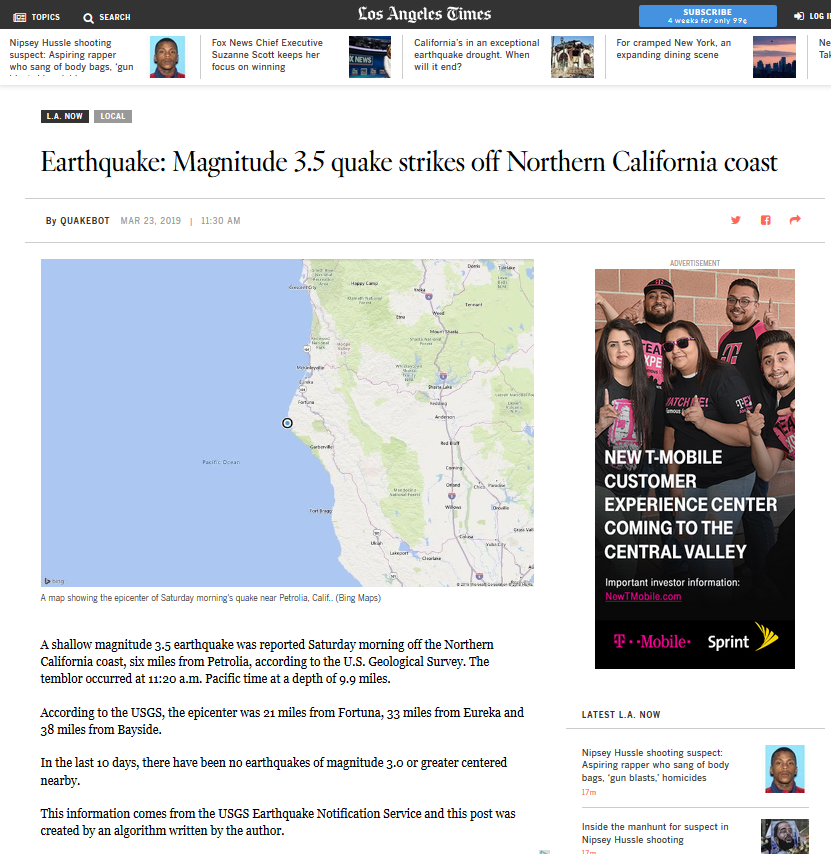

Reporting at lightning speed has its drawbacks as well, as The Los Angeles Times found out in 2017. Its Quakebot published a story and a tweet about a massive magnitude 6.8 earthquake that had struck Isla Vista, Calif. But the temblor actually occurred in 1925. The U.S. Geological Survey was updating its database of historical earthquakes and sent an incorrect alert, which the bot spotted and automatically reported one minute later.
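
One way to picture a safeguard against this kind of error is a freshness check that runs before a bot publishes anything automatically. The sketch below is an assumption for illustration only. It does not describe how Quakebot works or how the Times responded, and the one-hour threshold and the `should_auto_publish` name are hypothetical.

```python
from datetime import datetime, timedelta, timezone

# Assumed threshold: anything older than this is held for a human editor.
MAX_EVENT_AGE = timedelta(hours=1)


def should_auto_publish(event_time, now=None, max_age=MAX_EVENT_AGE):
    """Return True only if the event happened recently (and not in the future)."""
    if now is None:
        now = datetime.now(timezone.utc)
    age = now - event_time
    return timedelta(0) <= age <= max_age


if __name__ == "__main__":
    now = datetime(2017, 6, 21, 23, 51, tzinfo=timezone.utc)
    historical = datetime(1925, 6, 29, 14, 42, tzinfo=timezone.utc)
    fresh = now - timedelta(minutes=2)
    print(should_auto_publish(historical, now=now))  # held back for review
    print(should_auto_publish(fresh, now=now))       # eligible to publish
```

A check like this trades a little speed for a human look at anything unusual, in line with the "humans and machines working together" approach that Micklethwait describes.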

AI’s problems make Broussard skeptical that journalism is marching towards a fully automated future. “I would question that assumption because [creating] news is not the same as producing a car on an assembly line,” she said. Initially, the ability to produce voluminous content might be exciting to newsrooms, but it’s actually the equivalent of junk food. “What I want us to think about is … what would it look like to have a ‘slow food’ movement for news?” she asked. “What if we think about smaller-batch, artisanally produced news? It’s more expensive. It’s not as efficient, but it’s actually better.”

Added Lewis: “It’s kind of worth asking, is this just a race to the bottom, in terms of producing masses and masses of content through automation? … Or, is it better for news organizations to actually think about doing less? Producing less news, but making it better, or making it somehow more value-added.” He urged the media to value quality over quantity — produce stories that are “more investigative, more original, more creative in a way that would actually meet consumers’ needs and not be part of this kind of race to the bottom that I think we’re seeing.”